The Hidden Reality of System Limits

What’s tricky is that many systems are already closer to that limit than people think. They run fine day to day, everything feels smooth, users don’t complain. But that’s under normal conditions. The moment something changes—more users, more requests, even just a sudden spike—the cracks start to show.

I’ve seen this happen in pretty ordinary situations. A campaign goes live, traffic jumps, and suddenly the app gets slow. At first it’s just a bit of lag, then pages stop loading, and eventually you get errors everywhere. No attacker involved, just too much demand. Now picture that same demand created by someone on purpose.

Denial-of-Service: Forcing the Collapse

That’s basically what a DoS attack is. Instead of waiting for organic traffic to grow, someone forces it. They flood the system with requests, sometimes from many sources at once, sometimes from just a few machines if the target is weak enough. And if the system isn’t built to absorb that kind of pressure, it doesn’t stand a chance.

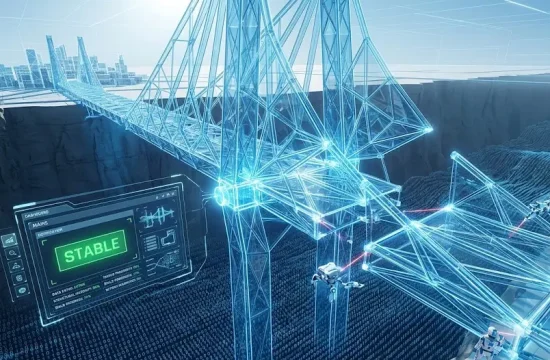

This is where performance becomes a security issue, even if people don’t label it that way. A system with low capacity, poor scaling, or inefficient processes is easier to take down. It’s like a small bridge: it doesn’t take much extra weight to overload it.

And the thing is, teams don’t always design for worst-case scenarios. They design for what they expect: average traffic, maybe a bit more. That’s reasonable, since no one wants to overspend on infrastructure “just in case”. But the gap between average and extreme is where problems live.

When Safeguards Fail Under Stress

Once the system starts struggling, things get messy fast. It’s not just that it slows down. Behavior changes. Requests start timing out, retries pile up, queues grow. Sometimes the system tries to compensate and ends up making things worse, like retrying failed operations over and over, adding even more load.
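To make the retry problem concrete, here is a minimal sketch of one common way to keep retries from piling on: capped exponential backoff with jitter and a fixed retry budget. The names `call_with_retries` and `backoff_ceiling`, and the default values, are made up for illustration. They are not from any particular library.

```python
import random
import time


def backoff_ceiling(attempt, base=0.5, cap=30.0):
    """Upper bound (seconds) on the wait before retry number `attempt` (0-based)."""
    return min(cap, base * (2 ** attempt))


def call_with_retries(operation, max_attempts=4, base=0.5, cap=30.0,
                      sleep=time.sleep, rng=random.random):
    """Call `operation` until it succeeds or the retry budget is spent."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except Exception:
            if attempt == max_attempts - 1:
                # Budget spent: surface the failure instead of adding more load
                raise
            # Full jitter: wait a random fraction of the exponential ceiling,
            # so many clients do not all retry at the same instant
            sleep(rng() * backoff_ceiling(attempt, base, cap))
```

The point of the design is that each failed attempt waits longer than the last, and the total number of attempts is bounded, so a struggling system sees fewer repeat requests rather than more.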

And under that kind of stress, safeguards don’t always behave as expected. You might have validation steps, logging, security checks… but if the system is overwhelmed, some of those processes get delayed or skipped. Not by design, but because everything is fighting for resources. CPU, memory, connections. Something has to give.

The Architectural Weak Link

There’s also the architectural side of it, which people tend to underestimate. You can have a decent server and still be vulnerable if everything depends on a single point. One database, one service, one bottleneck. Once that piece gets overloaded, the whole system feels it.

I remember a case where the database was the weak link. The app itself could handle more traffic, but every request needed a database call. Once the database slowed down, everything backed up behind it. Users saw a “slow app”, but the real issue was deeper. That’s the kind of thing attackers look for, even if they don’t know the details. They just push until something breaks.
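A rough way to see why a slower database backs everything up is Little’s law: the average number of requests in flight equals the arrival rate times the time each request takes. The sketch below applies it to a connection pool. The traffic figure, latencies, and pool size are hypothetical numbers, not measurements from the case above.

```python
def busy_connections(arrival_rate_per_s, query_latency_s):
    """Little's law: average in-flight requests = arrival rate * time per request."""
    return arrival_rate_per_s * query_latency_s


def describe(arrival_rate_per_s, latency_ms, pool_size):
    """Summarize whether a pool of `pool_size` connections keeps up."""
    needed = busy_connections(arrival_rate_per_s, latency_ms / 1000)
    verdict = "keeps up" if needed <= pool_size else "requests back up"
    return f"{latency_ms} ms/query -> ~{needed:.0f} connections needed, {verdict}"


if __name__ == "__main__":
    # Hypothetical numbers: 200 requests/s against a pool of 50 connections
    for latency_ms in (20, 100, 500):
        print(describe(200, latency_ms, pool_size=50))
```

With these assumed numbers, traffic never changes, yet once queries take 500 ms the app needs about 100 connections and only has 50. The slowdown alone is enough to create the queue.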

The Scaling and Monitoring Gap

Scaling helps, but it’s not a magic fix. A lot of systems say they “scale”, but in practice there’s a delay. New resources take time to spin up, and configurations don’t always react instantly. So there’s a window where the system is still exposed. If the spike hits fast enough, it can overwhelm everything before scaling catches up.
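The size of that window is easy to estimate with back-of-the-envelope arithmetic. The sketch below is a simplification: it assumes a constant spike, a fixed spin-up delay, and that unserved requests simply wait in a queue (real systems would also time out and drop some). The function names and figures are illustrative.

```python
def backlog_during_scale_up(spike_rps, capacity_rps, scale_delay_s):
    """Requests that arrive but cannot be served before new capacity is ready."""
    excess_rps = max(0, spike_rps - capacity_rps)
    return excess_rps * scale_delay_s


def drain_time_s(backlog, spike_rps, scaled_capacity_rps):
    """Seconds needed to clear the backlog once the extra capacity is online."""
    if backlog == 0:
        return 0.0
    spare_rps = scaled_capacity_rps - spike_rps
    if spare_rps <= 0:
        return float("inf")  # still under water: the queue never shrinks
    return backlog / spare_rps


if __name__ == "__main__":
    # Hypothetical: 1,500 req/s spike, 1,000 req/s capacity, 90 s to scale to 2,000 req/s
    backlog = backlog_during_scale_up(1500, 1000, 90)
    print(backlog, drain_time_s(backlog, 1500, 2000))
```

Under those assumptions, 90 seconds of scaling delay leaves 45,000 requests waiting, and it takes another 90 seconds at the new capacity to work through them.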

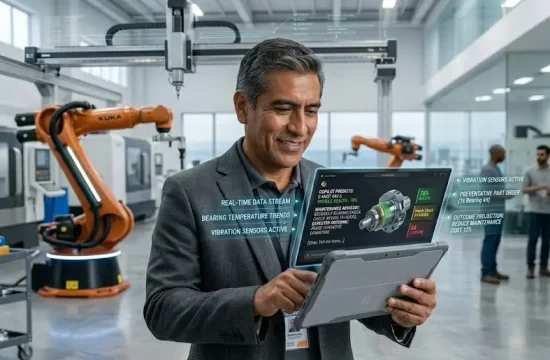

Monitoring can make a big difference, but only if it’s actually used well. It’s not just about having dashboards, it’s about noticing patterns early. A sudden increase in requests, unusual traffic from certain regions, things like that. If you catch it early, you might have time to react. If not, you’re just watching the system go down in real time.
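As one possible way to “notice patterns early”, the sketch below flags a sample that is far above a rolling baseline of recent request counts. The class name `SpikeDetector`, the window size, and the 3x threshold are arbitrary assumptions for illustration. A real setup would tune them and likely use its monitoring tool’s built-in alerting instead.

```python
from collections import deque
from statistics import mean


class SpikeDetector:
    """Flag request counts that jump well above the recent average."""

    def __init__(self, window=5, factor=3.0):
        self.history = deque(maxlen=window)  # most recent normal samples
        self.factor = factor                 # how many times the baseline counts as a spike

    def observe(self, count):
        """Record one sample (e.g. requests per minute); return True on a spike."""
        full = len(self.history) == self.history.maxlen
        spike = full and count > self.factor * mean(self.history)
        if not spike:
            # Keep spikes out of the baseline so an ongoing flood stays flagged
            self.history.append(count)
        return spike


if __name__ == "__main__":
    detector = SpikeDetector()
    for sample in (100, 110, 90, 105, 95, 120, 900):
        print(sample, detector.observe(sample))
```

Only the last sample in this made-up series is flagged: 900 is well over three times the recent average of roughly 100.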